7. Thermodynamic Feasibility and Sampling of Metabolite Concentrations

This notebook demonstrates how MASSpy is used to ensure thermodynamic feasibility in the metabolite concentrations of a model, and how samples of thermodynamically feasible metabolite concentrations are generated for a model.

[1]:

import matplotlib.pyplot as plt

import numpy as np

import mass.example_data

from mass import MassConfiguration

from mass.thermo import ConcSolver

MASSCONFIGURATION = MassConfiguration()

Note: Throughout this notebook, the term thermodynamic feasibility constraint for a reaction refers to the following:

For a given reaction:

where

\(\textbf{S}\) refers to the stoichiometry of the reaction

\(\textbf{x}\) refers to the vector of concentrations for the reaction metabolites

\(\text{Keq}\) refers to the equilibrium constant of the reaction

\(\text{v}\) refers to the reaction flux.

\(\epsilon\) refers to a buffer value for the constraint.

Based on methods outlined in [KummelPH06] and [HDR13]

7.1. The ConcSolver Object

Upon initialization of the ConcSolver instance, the model becomes associated with the ConcSolver instance. Metabolite concentrations and reaction equilibrium constants are added as variables to the ConcSolver.Thermodynamic feasibility constraints, based on the reaction’s flux direction and stoichiometry, are created and also added to the solver. All solver variables and constraints exist in logarithmic space.

Metabolite concentrations that should be excluded from the solver can be defined using the exclude_metabolites argument (e.g., hydrogen and water). Reactions can also be excluded from the solver using the exclude_reactions argument.

Reactions that should exist at equilibrium or equilibrate very quickly should be set using the equilibrium_reactions argument. These reactions, such as the hemoglobin binding reactions and the adenylate kinase (ADK1) reaction, typically have a steady state flux value of 0.

[2]:

# Load the JSON version of the textbook model

model = mass.example_data.create_example_model("textbook")

Set parameter Username

[3]:

conc_solver = ConcSolver(

model,

excluded_metabolites=["h_c", "h2o_c"],

excluded_reactions=None,

equilibrium_reactions=["HBDPG", "HBO1", "HBO2", "HBO3", "HBO4", "ADK1",

"PFK_L"])

# View the model in the ConcSolver

conc_solver.model

[3]:

| Name | RBC_PFK |

| Memory address | 0x07fdb2a96ce90 |

| Stoichiometric Matrix | 68x76 |

| Matrix Rank | 63 |

| Number of metabolites | 68 |

| Initial conditions defined | 68/68 |

| Number of reactions | 76 |

| Number of genes | 0 |

| Number of enzyme modules | 1 |

| Number of groups | 16 |

| Objective expression | 0 |

| Compartments | Cytosol |

The ConcSolver also becomes associated with the loaded model.

[4]:

print(model.conc_solver)

<ConcSolver RBC_PFK at 0x7fdb2a95ef10>

Concentrations and equilibrium constants cannot be negative numbers; therefore, the bounds for each variable are set to ensure such behavior. Because \(\ln(0)\) results in a domain error, the ConcSolver has the zero_value_log_substitute attribute. The value of the attribute is substituted for 0 to avoid any errors.

For example, if zero_value_log_substitute=1e-10, then taking the logarithm of 0 is treated as \(\ln(0) \approx \ln(1*10^{-10}) = -23.026\).

[5]:

print("Substitute for ln(0): ln({0:.1e})".format(

conc_solver.zero_value_log_substitute))

Substitute for ln(0): ln(1.0e-10)

Variables can be accessed through the variables attribute. The number of variables equals the combined total of the number of included metabolites and the number of included reactions. Specific variables can be accessed using their identifiers as a key.

[6]:

print("Number of included metabolites: {0}".format(len(conc_solver.included_metabolites)),

"\nNumber of included reactions: {0}".format(len(conc_solver.included_reactions)),

"\nTotal number of variables: {0}\n".format(len(conc_solver.variables)))

# Access the glucose concentration variable

variable = conc_solver.variables["glc__D_c"]

print("The glucose concentration variable",

"\n----------------------------------\n",

variable)

Number of included metabolites: 66

Number of included reactions: 48

Total number of variables: 114

The glucose concentration variable

----------------------------------

-23.025850929940457 <= glc__D_c <= inf

Constraints can be accessed through the constraints attribute. The number of constraints equals the number of included reactions. Just like variables, specific constraints can be accessed using reaction identifiers as a key.

[7]:

print("Total number of constraints: {0}\n".format(len(conc_solver.constraints)))

# Access the hexokinase thermodynamic feasibility constraint

print("Thermodynamic feasibility constraint for HEX1",

"\n-------------------------------------------\n",

conc_solver.constraints["HEX1"])

Total number of constraints: 48

Thermodynamic feasibility constraint for HEX1

-------------------------------------------

HEX1: -1.0*Keq_HEX1 + 1.0*adp_c - 1.0*atp_c + 1.0*g6p_c - 1.0*glc__D_c <= 0

Currently, the constraints do not have an error buffer, which provides some flexibility when solving the underlying mathematical problem of the ConcSolver. The constraint_buffer attribute can be used to set the epsilon value of the constraint. The constraints must be reset in order for the changed buffer value to take effect.

[8]:

conc_solver.constraint_buffer = 1e-7

conc_solver.reset_constraints()

print("Thermodynamic feasibility constraint for HEX1",

"\n-------------------------------------------\n",

conc_solver.constraints["HEX1"])

Thermodynamic feasibility constraint for HEX1

-------------------------------------------

HEX1: -1.0*Keq_HEX1 + 1.0*adp_c - 1.0*atp_c + 1.0*g6p_c - 1.0*glc__D_c <= -1e-07

Upon initialization of the ConcSolver, the ConcSolver.problem_type is considered generic and no objective is set.

[9]:

print(conc_solver.problem_type)

print(conc_solver.objective)

generic

Maximize

0

The following sections demonstrate different types of problems that can be solved using the ConcSolver.

7.2. Solving for Feasible Concentrations

7.2.1. Creating the QP problem

In order to determine thermodynamically feasible concentrations, a quadratic programming (QP) problem can be set up as follows:

Minimize

subject to

where

\(\textbf{S}\) refers to the stoichiometric matrix.

\(\textbf{x}\) refers to the vector of metabolite concentrations.

\(\textbf{x}_0\) refers to the vector of initial metabolite concentrations.

\(\text{Keq}_i\) refers to the equilibrium constant of reaction \(i\).

\(\text{v}_i\) refers to the flux for reaction \(i\).

\(\text{x}_j\) refers to the concentration of metabolite \(j\).

\(\epsilon\) refers to a buffer value for the constraint.

Note that solving the QP problem requires a capable solver. Although MASSpy does not come with any QP solvers installed, it can interface with an installed version of gurobi through the optlang package.

The first step is to set the optimization solver to one that is capable of handling quadratic objectives.

[10]:

conc_solver.solver = conc_solver.choose_solver(qp=True)

print(repr(conc_solver.solver))

<optlang.gurobi_interface.Model object at 0x7fdb2a95efd0>

To set up the underlying mathematical problem in the ConcSolver, the setup_feasible_qp_problem() method can be used. The fixed_conc_bounds and fixed_Keq_bounds arguments can be used to set the upper and lower bounds of the corresponding variables equal to one other, fixing the variable’s value. In this example, the metabolite concentrations are allowed to change, while the equilibrium constants are fixed at their original value.

[11]:

conc_solver.setup_feasible_qp_problem(

fixed_Keq_bounds=conc_solver.model.reactions)

Using the setup_feasible_qp_problem() method also sets the objective for the optimization.

[12]:

print(conc_solver.objective_direction)

conc_solver.objective

min

[12]:

<optlang.gurobi_interface.Objective at 0x7fdb79557790>

After using the setup_feasible_qp_problem() method, the ConcSolver is ready for optimization. The problem_type is automatically changed to reflect the current problem setup.

[13]:

print(conc_solver.problem_type)

feasible_qp

7.2.2. The ConcSolution Object

Once the ConcSolver is set up to solve the QP, the next step is to use the optimize() method to solve the QP. A successful optimization returns a ConcSolution object. All values are transformed back into linear space upon being returned.

[14]:

conc_solution = conc_solver.optimize()

conc_solution

[14]:

| variables | reduced_costs | |

|---|---|---|

| glc__D_c | 1.296763 | 0.000000 |

| g6p_c | 0.165018 | 0.000000 |

| f6p_c | 0.067532 | 0.000000 |

| fdp_c | 0.016615 | 0.000000 |

| dhap_c | 0.169711 | 0.000000 |

| ... | ... | ... |

| Keq_PFK_L | 0.001100 | -0.103955 |

| Keq_PFK_T1 | 10.000000 | -1.097455 |

| Keq_PFK_T2 | 10.000000 | -0.408917 |

| Keq_PFK_T3 | 10.000000 | 0.000000 |

| Keq_PFK_T4 | 10.000000 | 0.000000 |

114 rows × 2 columns

The ConcSolution object has several methods for viewing the results of the optimization and returning pandas objects containing the numerical solutions.

[15]:

dir(conc_solution)

[15]:

['Keq_reduced_costs',

'Keqs',

'Keqs_to_frame',

'concentration_reduced_costs',

'concentrations',

'concentrations_to_frame',

'get_primal_by_id',

'objective_value',

'shadow_prices',

'status',

'to_frame']

[16]:

from mass.visualization import plot_comparison

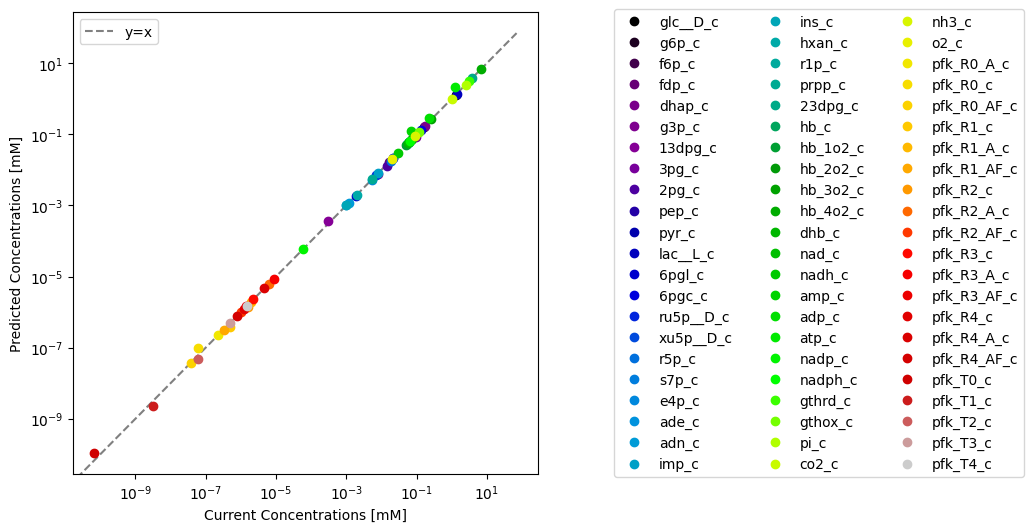

Through visualization features of MASSPy, the predicted values can be plotted against the original model values for comparison using the plot_comparison() function.

[17]:

# Create figure

fig, ax = plt.subplots(figsize=(6, 6))

# Compare values

plot_comparison(

x=model, y=conc_solution, compare="concentrations",

observable=conc_solver.included_metabolites, legend="right outside",

xlabel="Current Concentrations [mM]",

ylabel="Predicted Concentrations [mM]",

plot_function="loglog", xy_line=True, xy_legend="best");

The model in the ConcSolver can be updated with the results contained within the ConcSolution using the update_model_with_solution() method. Setting inplace=True updates the current model in the ConcSolver, while setting inplace=False replaces the model in the ConcSolver with an updated copy the model without modifying the original. Setting inplace=False also removes the previous model’s association with the ConcSolver.

[18]:

conc_solver.update_model_with_solution(

conc_solution, concentrations=True, Keqs=False, inplace=False)

print("Same model object? {0}".format(conc_solver.model == model))

print(model.conc_solver)

Same model object? False

None

7.3. Concentration Sampling

7.3.1. Basic usage

The easiest method of sampling concentrations is to use the sample_concentrations() function in the conc_sampling submodule.

[19]:

from mass.thermo.conc_sampling import sample_concentrations

To set up the ConcSolver for sampling, the setup_sampling_problem method is used. The conc_percent_deviation and Keq_percent_deviation arguments can be used to set the variable bounds for sampling. For this example, the defined concentrations are allowed to deviate up to %75 from their baseline value, while the defined equilibrium constants remain fixed at their current values.

[20]:

conc_solver.setup_sampling_problem(

conc_percent_deviation=0.75,

Keq_percent_deviation=0)

print(conc_solver.problem_type)

sampling

Using the sample_concentrations() function requires at least two arguments: a ConcSolver that has been set up for sampling, and the number of samples to generate.

[21]:

samples = sample_concentrations(conc_solver, n=20)

samples.head()

[21]:

| glc__D_c | g6p_c | f6p_c | fdp_c | dhap_c | g3p_c | _13dpg_c | _3pg_c | _2pg_c | pep_c | ... | pfk_R3_A_c | pfk_R3_AF_c | pfk_R4_c | pfk_R4_A_c | pfk_R4_AF_c | pfk_T0_c | pfk_T1_c | pfk_T2_c | pfk_T3_c | pfk_T4_c | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1.596713 | 0.210179 | 0.086054 | 0.020991 | 0.157050 | 0.008665 | 0.000595 | 0.109797 | 0.016130 | 0.027321 | ... | 0.000002 | 3.656518e-07 | 3.054477e-07 | 0.000001 | 1.940653e-07 | 3.720077e-11 | 6.899861e-10 | 1.257736e-08 | 1.240015e-07 | 3.823113e-07 |

| 1 | 1.214239 | 0.155950 | 0.059334 | 0.014813 | 0.098487 | 0.005100 | 0.000364 | 0.057615 | 0.008335 | 0.013158 | ... | 0.000005 | 7.403827e-07 | 7.002685e-07 | 0.000003 | 5.186616e-07 | 1.202555e-10 | 1.241397e-09 | 1.452112e-08 | 2.186275e-07 | 6.025745e-07 |

| 2 | 1.556434 | 0.183490 | 0.074707 | 0.016579 | 0.079665 | 0.004008 | 0.000373 | 0.054313 | 0.007880 | 0.010496 | ... | 0.000008 | 1.239471e-06 | 1.346049e-06 | 0.000005 | 7.576529e-07 | 9.695340e-11 | 1.035304e-09 | 1.670452e-08 | 2.482744e-07 | 6.245709e-07 |

| 3 | 1.236665 | 0.146335 | 0.058533 | 0.006160 | 0.046322 | 0.002591 | 0.000291 | 0.033543 | 0.004064 | 0.005429 | ... | 0.000004 | 6.050883e-07 | 6.328371e-07 | 0.000003 | 4.192992e-07 | 7.414760e-11 | 7.697433e-10 | 1.314203e-08 | 2.033830e-07 | 5.776471e-07 |

| 4 | 2.072819 | 0.239930 | 0.085145 | 0.008354 | 0.061132 | 0.003399 | 0.000313 | 0.043632 | 0.005063 | 0.007502 | ... | 0.000004 | 4.141317e-07 | 5.283614e-07 | 0.000002 | 4.392457e-07 | 5.786002e-11 | 8.159291e-10 | 1.393734e-08 | 2.506431e-07 | 7.495134e-07 |

5 rows × 66 columns

By default sample_concentrations uses the optgp method [MHM14], as it is suited for larger models and can run in parallel. The number of processes can be changed by using the processes argument.

[22]:

print("One process:")

%time samples = sample_concentrations(conc_solver, n=1000, processes=1)

print("\nTwo processes:")

%time samples = sample_concentrations(conc_solver, n=1000, processes=2)

One process:

CPU times: user 11.1 s, sys: 682 ms, total: 11.8 s

Wall time: 10.8 s

Two processes:

CPU times: user 391 ms, sys: 200 ms, total: 592 ms

Wall time: 5.62 s

Alternatively, the Artificial Centering Hit-and-Run for sampling [KS98] can be utilized by setting the method to achr. The achr method does not support parallel execution, but it has good convergence and is almost Markovian.

[23]:

samples = sample_concentrations(conc_solver, n=100, method="achr")

In general, setting up the sampler is expensive since initial search directions are generated by solving many linear programming problems. Thus, it is recommended to generate as many samples as possible in one go. However, generating large numbers of samples might require finer control over the sampling procedure, as described in the following section.

7.3.2. Advance usage

7.3.2.1. Sampler objects

The concentration sampling process can be controlled on a lower level by using the sampler classes directly, found in the conc_sampling submodule.

[24]:

from mass.thermo.conc_sampling import ConcACHRSampler, ConcOptGPSampler

Both concentration sampler classes have standardized interfaces and take some additional arguments.

For example, one such argument is the thinning factor, where “thinning” means only recording samples every x iterations where x is the thinning factor. Higher thinning factors mean less correlated samples but also larger computation times.

By default, the samplers use a thinning factor of 100, which creates roughly uncorrelated samples. Increasing the thinning factor leads to better mixing of samples, while lowering the thinning factor leads to more correlated samples. For example, it may be desirable to set a thinning factor of 1 to obtain all iterates when studying convergence for a model.

Samplers can be seeded so that they produce the same results each time they are run.

[25]:

conc_achr = ConcACHRSampler(conc_solver, thinning=1, seed=5)

samples = conc_achr.sample(10, concs=True)

# Display only the first 5 samples

samples.head(5)

[25]:

| glc__D_c | g6p_c | f6p_c | fdp_c | dhap_c | g3p_c | _13dpg_c | _3pg_c | _2pg_c | pep_c | ... | pfk_R3_A_c | pfk_R3_AF_c | pfk_R4_c | pfk_R4_A_c | pfk_R4_AF_c | pfk_T0_c | pfk_T1_c | pfk_T2_c | pfk_T3_c | pfk_T4_c | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1.592336 | 0.197568 | 0.078832 | 0.018910 | 0.142081 | 0.007510 | 0.000472 | 0.090709 | 0.013006 | 0.021494 | ... | 0.000003 | 4.536657e-07 | 3.693232e-07 | 0.000001 | 2.228541e-07 | 3.305772e-11 | 6.624519e-10 | 1.327504e-08 | 1.311206e-07 | 3.905462e-07 |

| 1 | 2.248172 | 0.285898 | 0.116922 | 0.004324 | 0.043806 | 0.002030 | 0.000364 | 0.022330 | 0.003282 | 0.005558 | ... | 0.000002 | 3.634455e-07 | 3.032560e-07 | 0.000001 | 1.922307e-07 | 2.714412e-11 | 5.768461e-10 | 1.225869e-08 | 1.236426e-07 | 3.810419e-07 |

| 2 | 2.263896 | 0.288041 | 0.117857 | 0.004522 | 0.045433 | 0.002117 | 0.000370 | 0.023388 | 0.003439 | 0.005827 | ... | 0.000002 | 3.618207e-07 | 3.020507e-07 | 0.000001 | 1.916574e-07 | 2.703623e-11 | 5.752355e-10 | 1.223898e-08 | 1.234960e-07 | 3.808523e-07 |

| 3 | 2.258775 | 0.287343 | 0.117552 | 0.004406 | 0.044485 | 0.002067 | 0.000366 | 0.022777 | 0.003348 | 0.005673 | ... | 0.000002 | 3.623478e-07 | 3.024418e-07 | 0.000001 | 1.918435e-07 | 2.707124e-11 | 5.757583e-10 | 1.224538e-08 | 1.235436e-07 | 3.809139e-07 |

| 4 | 2.267224 | 0.288494 | 0.109154 | 0.004331 | 0.043882 | 0.002036 | 0.000365 | 0.022409 | 0.003295 | 0.005585 | ... | 0.000002 | 3.614792e-07 | 3.017973e-07 | 0.000001 | 1.915368e-07 | 2.701355e-11 | 5.748966e-10 | 1.223483e-08 | 1.234651e-07 | 3.808123e-07 |

5 rows × 66 columns

The sample() method also comes with the concs argument that controls the sample output. Setting concs=True returns only concentration variables, while setting concs=False returns the equilibrium constant variables and any additional variables.

[26]:

samples = conc_achr.sample(10, concs=False)

print(samples.columns)

Index(['ade_c', 'adn_c', 'imp_c', 'prpp_c', 'nh3_c', 'glc__D_c', 'g6p_c',

'adp_c', 'atp_c', 'Keq_HEX1',

...

'pfk_T0_c', 'Keq_PFK_L', 'pfk_T1_c', 'Keq_PFK_T1', 'pfk_T2_c',

'Keq_PFK_T2', 'pfk_T3_c', 'Keq_PFK_T3', 'pfk_T4_c', 'Keq_PFK_T4'],

dtype='object', length=114)

The ConcOptGPSampler has an additional processes argument that specifies how many processes are used to create parallel sampling chains. The number of processes should be in the order of available CPU cores for maximum efficiency. As noted before, the class initialization can take up to a few minutes due to generation of initial search directions. On the other hand, sampling is quicker.

[27]:

conc_optgp = ConcOptGPSampler(conc_solver, processes=4, seed=5)

For the ConcOptGPSampler, the number of samples should be a multiple of the number of processes. Otherwise, the number is increased automatically to the nearest multiple.

[28]:

samples = conc_optgp.sample(10)

print("Number of samples generated: {0}".format(len(samples)))

Number of samples generated: 12

7.3.2.2. Batch sampling

Sampler objects are made for generating billions of samples, however using the sampling functions might quickly fill up the computer RAM when working with genome-scale models.

In this scenario, the batch method of the sampler objects might be useful. The batch method takes two arguments: the number of samples in each batch and the number of batches.

Suppose the concentration of ATP, ADP and AMP are unknown. The batch sampler could be used to generate 10 batches of feasible concentrations with 100 samples each. The samples could be averaged to get the mean metabolite concentrations per batch. Finally, the mean metabolite concentrations and standard deviation could be calculated.

[29]:

# Remove current initial conditions for example

conc_solver.model.metabolites.atp_c.ic = None

conc_solver.model.metabolites.adp_c.ic = None

conc_solver.model.metabolites.amp_c.ic = None

# Set up concentration sampling problem

conc_solver.setup_sampling_problem(

conc_percent_deviation=0.5,

Keq_percent_deviation=0)

# Get batch samples

conc_optgp = ConcOptGPSampler(conc_solver, processes=1, seed=5)

batch_samples = [sample for sample in conc_optgp.batch(100, 10)]

# Determine average metabolite concentrations per batch

for met in ["atp_c", "adp_c", "amp_c"]:

met = conc_solver.model.metabolites.get_by_id(met)

per_batch_axp_ave = [

np.mean(sample[met.id])

for sample in batch_samples]

print("Ave. {2} concentration: {0:.5f} +- {1:.5f}".format(

np.mean(per_batch_axp_ave), np.std(per_batch_axp_ave), met.id))

met.ic = np.mean(per_batch_axp_ave)

Ave. atp_c concentration: 2.17516 +- 0.10551

Ave. adp_c concentration: 0.24789 +- 0.01742

Ave. amp_c concentration: 0.12479 +- 0.00738